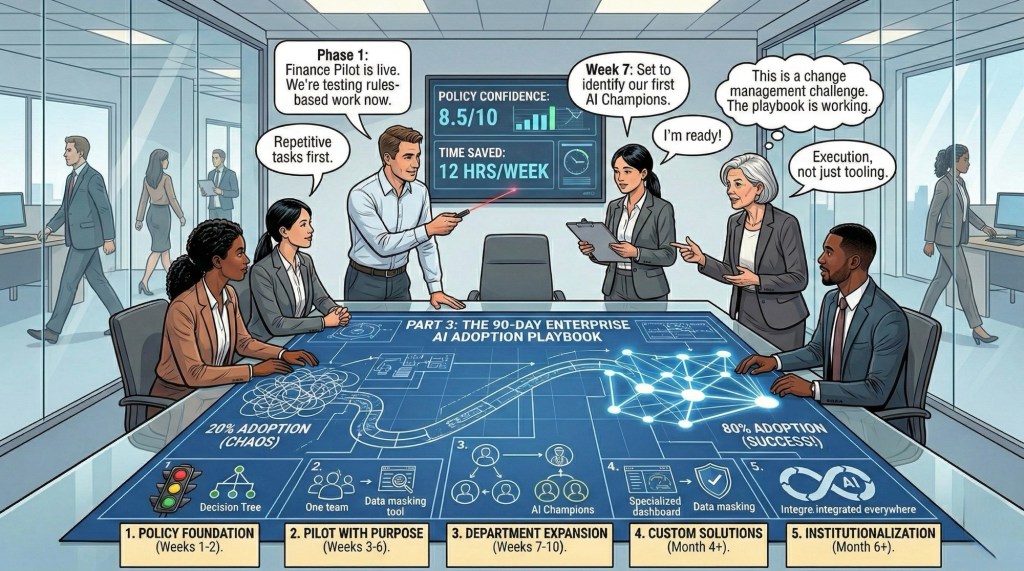

In Part 1, I explained why enterprises are stuck at 20% utilization despite massive investment.

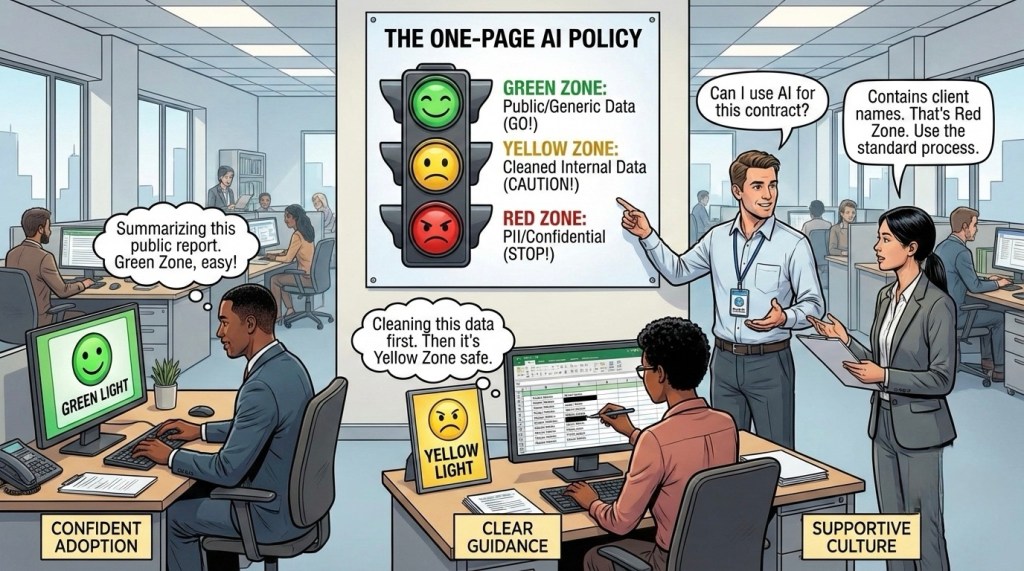

In Part 2, I shared the GREEN/YELLOW/RED policy framework that creates confident adoption.

Today, I’m giving you the exact 90-day rollout plan to move the needle to 80%.

This isn’t theory; this is an implementation playbook that is working at scale right now.

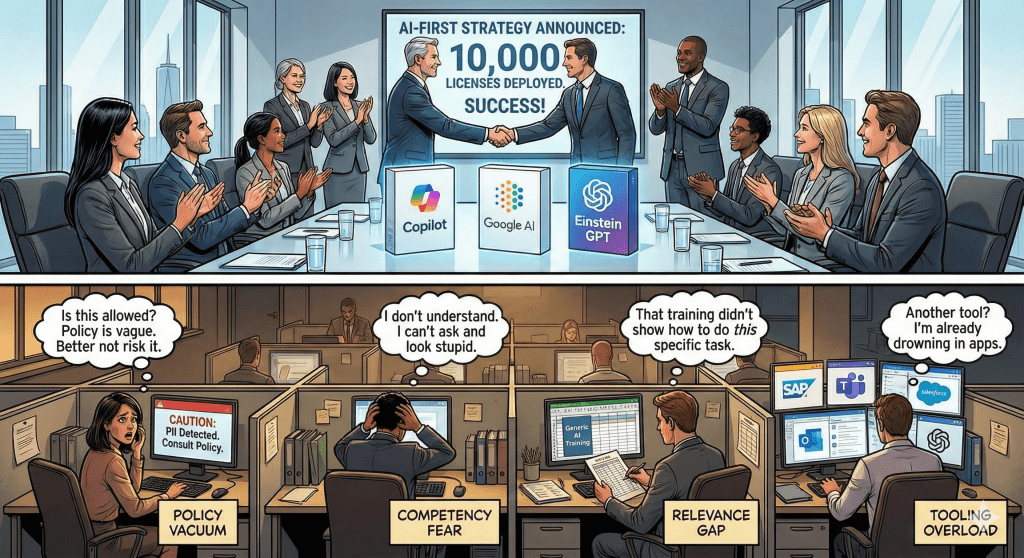

The Sequence Error

Most enterprises fail because they treat adoption as a technology problem when it is actually a change management challenge.

If you just deploy the tool, announce it, and provide generic training, you will keep wondering why nobody uses it. Instead, work through the steps in the correct sequence, as below.

The right sequence:

- Build policy clarity (The Foundation)

- Pilot with one high-impact team

- Create role-specific solutions

- Enable peer-to-peer transfer

- Scale systematically

The 5-Phase Framework

Phase 0: Policy Foundation (Weeks 1-2)

Before you train a single employee, establish the “Rules of the Road.” Without policy confidence, an employee will avoid a tool that saves them 10 hours a week if they fear it might get them fired.

Actions:

- Create your GREEN/YELLOW/RED decision tree with 20-30 department-specific examples

- Identify AI Policy Champions (one per major department)

- Establish an #ai-policy-questions channel with a 2-hour response SLA

Success Metric: 80% of employees must correctly categorize 5 common tasks as GREEN/YELLOW/RED before moving to Phase 1.

Why it matters: This single step determines whether your entire rollout succeeds or stalls.
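To make the Phase 0 gate concrete, here is a minimal Python sketch of how the check could be scored. It assumes "correctly categorize 5 common tasks" means an employee gets all five right; the `QuizResult` class, the `phase0_gate` function, and the placeholder task names and zones are hypothetical, not part of the framework itself.

```python
from dataclasses import dataclass

PASS_THRESHOLD = 0.80  # 80% target from the Phase 0 success metric


@dataclass
class QuizResult:
    employee_id: str
    answers: dict  # task name -> zone the employee picked


def phase0_gate(results, answer_key):
    """Return (share of employees with every task correct, ready for Phase 1?)."""
    if not results:
        return 0.0, False
    passing = sum(
        1
        for r in results
        if all(r.answers.get(task) == zone for task, zone in answer_key.items())
    )
    share = passing / len(results)
    return share, share >= PASS_THRESHOLD


# Hypothetical answer key: five placeholder tasks with illustrative zones.
ANSWER_KEY = {
    "task_1": "GREEN",
    "task_2": "GREEN",
    "task_3": "YELLOW",
    "task_4": "YELLOW",
    "task_5": "RED",
}

all_correct = dict(ANSWER_KEY)
one_wrong = {**ANSWER_KEY, "task_5": "GREEN"}  # misjudges the RED task

results = [QuizResult(f"emp{i}", all_correct) for i in range(4)]
results.append(QuizResult("emp4", one_wrong))

share, ready = phase0_gate(results, ANSWER_KEY)
print(f"{share:.0%} passed; proceed to Phase 1: {ready}")
```

If you would rather allow one miss per employee, only the `all(...)` condition needs to change.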

Phase 1: Pilot with Purpose (Weeks 3-6)

Don’t launch enterprise-wide. Start with ONE department that has:

- Clear, measurable pain points

- Repetitive, rules-based work (Finance, Operations)

- Supportive leadership

- High visibility (success creates pull for others)

The Structure:

Week 3: Leadership identifies the top 3 “time-wasters”

- Example: Transaction categorization (12 hrs/week per analyst)

- Example: Vendor invoice matching (8 hrs/week per AP specialist)

- Example: Budget variance commentary (10 hrs/week for managers)

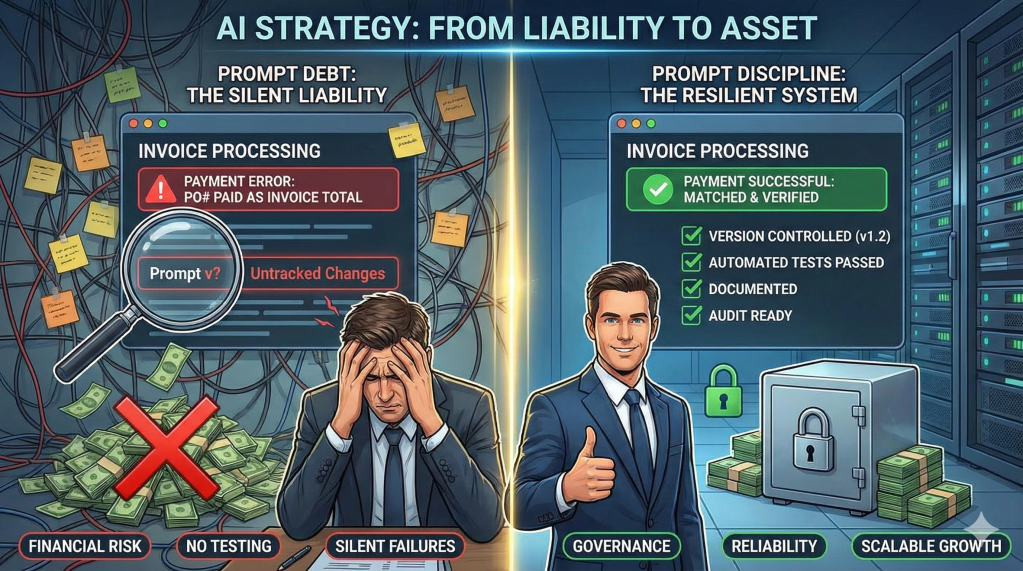

Week 4: Create 3-5 role-specific prompt templates with built-in policy compliance

Template structure:

TASK: [Specific task name]

POLICY ZONE: [GREEN/YELLOW/RED]

BEFORE USING (if YELLOW):

☐ Remove [specific identifiers]

☐ Keep only [specific data points]

PROMPT:

[Exact prompt with placeholders]
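If you keep the prompt library in code rather than in a document, the template structure above could be represented roughly like this. This is a sketch under assumptions: the `PromptTemplate` class, its field names, and the sample invoice-matching wording are illustrative, and the YELLOW checklist is only rendered when the zone calls for it.

```python
from dataclasses import dataclass, field

VALID_ZONES = {"GREEN", "YELLOW", "RED"}


@dataclass
class PromptTemplate:
    task: str
    zone: str
    prompt: str
    remove: list = field(default_factory=list)  # identifiers to strip first
    keep: list = field(default_factory=list)    # data points allowed to stay

    def __post_init__(self):
        if self.zone not in VALID_ZONES:
            raise ValueError(f"Unknown policy zone: {self.zone}")

    def render(self) -> str:
        lines = [f"TASK: {self.task}", f"POLICY ZONE: {self.zone}"]
        if self.zone == "YELLOW":
            # The pre-use checklist only applies to YELLOW tasks.
            lines.append("BEFORE USING:")
            lines += [f"☐ Remove {item}" for item in self.remove]
            lines += [f"☐ Keep only {item}" for item in self.keep]
        lines += ["PROMPT:", self.prompt]
        return "\n".join(lines)


# Hypothetical example based on the invoice-matching pain point.
template = PromptTemplate(
    task="Vendor invoice matching",
    zone="YELLOW",
    prompt="Match each invoice line in [INVOICE DATA] to a PO line in [PO DATA].",
    remove=["vendor names"],
    keep=["invoice amounts and dates"],
)
print(template.render())
```

Keeping the zone and checklist in the same object as the prompt means nobody can copy the prompt without seeing the policy steps that go with it.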

Week 5: Test with 10-15 volunteers

- Track time saved, output quality, policy compliance

- Collect feedback and edge cases

- Refine templates based on real usage

Week 6: Volunteers demonstrate results to full team

- Critical messaging: “Here’s how I saved 8 hours this week AND stayed within policy”

- Show before/after workflows

- Leadership reinforces that this is encouraged, not risky

Success Metrics:

- 80%+ of pilot users actively using AI 3x/week

- Measurable time savings documented

- Zero policy violations

- Policy confidence score 8+/10
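The "actively using AI 3x/week" metric above is easy to compute if you can export a usage log. The sketch below assumes the log is a list of `(user_id, date)` pairs for one week and that "3x/week" means three distinct days rather than three prompts in one sitting; both are my assumptions, and `active_user_share` is a hypothetical helper name.

```python
from datetime import date


def active_user_share(events, pilot_users, min_days=3):
    """Share of pilot users who used AI on at least `min_days` distinct days."""
    if not pilot_users:
        return 0.0
    days_used = {}
    for user, day in events:
        if user in pilot_users:
            days_used.setdefault(user, set()).add(day)
    active = sum(1 for days in days_used.values() if len(days) >= min_days)
    return active / len(pilot_users)


# Hypothetical one-week log for a five-person pilot.
pilot_users = {"a", "b", "c", "d", "e"}
mon, tue, wed = date(2026, 1, 5), date(2026, 1, 6), date(2026, 1, 7)
events = [(u, d) for u in "abcd" for d in (mon, tue, wed)]
events += [("e", mon)] * 3  # three sessions on one day: not counted as 3x/week

share = active_user_share(events, pilot_users)
print(f"{share:.0%} of pilot users are active 3x/week")
```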

The Proof: Success here creates the “pull” effect for the rest of the company.

Phase 2: Department-Wide Expansion (Weeks 7-10)

Week 7: Identify 2-3 “AI Champions” from your pilot group

These aren’t necessarily the most technical people; they are:

- The most enthusiastic advocates

- Those who demonstrated good policy judgment

- Those with peer credibility

Give Champions:

- 2 hours/week dedicated time for peer support

- Direct line to AI Policy Contact for escalations

- Recognition in leadership communications

- Early access to new AI features

Weeks 8-9: Roll out to full department

- Share updated prompt library (refined from pilot feedback)

- Host weekly “office hours” in Slack (Champions + Policy Contact)

- Track adoption metrics in real-time

- Continue collecting edge cases for policy refinement

Week 10: Department leadership presents results to executives

- Time saved per employee (specific hours/week)

- Tasks automated or accelerated

- Employee satisfaction improvement

- Policy compliance record (zero violations)

- ROI calculation (hours saved × average hourly cost)
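The ROI line above (hours saved × average hourly cost) can be broken down by role so executives see where the savings come from. A rough sketch follows; the `RoleSavings` class and every figure in it are made up for illustration. Note that this gives the gross value of time saved, so a full ROI figure would still need license and training costs subtracted.

```python
from dataclasses import dataclass


@dataclass
class RoleSavings:
    role: str
    headcount: int
    hours_saved_per_week: float
    hourly_cost: float  # fully loaded average hourly cost


def weekly_value_of_time_saved(roles):
    """Sum of headcount x hours saved x hourly cost across roles."""
    return sum(r.headcount * r.hours_saved_per_week * r.hourly_cost for r in roles)


# Hypothetical numbers, not taken from a real rollout.
roles = [
    RoleSavings("Analyst", headcount=10, hours_saved_per_week=8, hourly_cost=50),
    RoleSavings("AP specialist", headcount=5, hours_saved_per_week=4, hourly_cost=40),
]

weekly = weekly_value_of_time_saved(roles)
print(f"Weekly value of time saved: ${weekly:,.0f}")
```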

Success Metrics:

- 60%+ of department actively using AI 3x/week

- Documented productivity gains across multiple roles

- Policy confidence score 8+/10

- Ready to scale to next departments

Phase 3: Cross-Department Scale (Weeks 11-12+)

Now you have proof of concept. Time to scale.

Identify next 2-3 departments:

- Similar pain points to first pilot (e.g., Operations, HR)

- Different pain points but high potential (e.g., Sales, Customer Success)

- Mix of technical and non-technical teams

For each new department:

Weeks 1-2: Policy + Pain Point Mapping

- Adapt GREEN/YELLOW/RED framework for department-specific scenarios

- Identify top 3-5 time-wasters unique to this function

- Create role-specific prompt library

Weeks 3-4: Pilot with 10-15 volunteers

- Test templates, refine based on feedback

- Build department-specific Champions

Weeks 5-8: Department-wide rollout

- Leverage learnings from first pilot

- Adapt what worked, skip what didn’t

- Create cross-functional Champion network

The Acceleration Effect: Each subsequent rollout is faster because:

- Policy framework is established and proven

- Template creation process is documented

- Champions can learn from each other across departments

- Leadership sees consistent results, which builds confidence

The Network: Connect Champions across departments monthly to share cross-functional wins and emerging best practices.

Phase 4: Custom Solutions (Month 4+)

Once teams are comfortable with basic AI usage, introduce department-level customization. This is where AI moves from a “tool” to “how we work.”

For Finance:

- Custom Copilot pre-loaded with GL codes, vendor master list, fiscal calendar

- Built-in data masking (automatically removes account numbers, employee names)

- Integration with ERP for seamless workflow
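To show what "built-in data masking" might look like in its simplest form, here is a sketch that scrubs text before it reaches the model. It assumes account numbers are 8 to 12 digit runs and that you can supply a roster of employee names; the pattern, the `mask` function, and the placeholder tokens are all assumptions, and a production setup would more likely rely on the masking features of your AI platform or a dedicated data loss prevention tool.

```python
import re

# Assumed format: account numbers are standalone runs of 8 to 12 digits.
ACCOUNT_RE = re.compile(r"\b\d{8,12}\b")


def mask(text, employee_names):
    """Replace account numbers and known employee names with placeholders."""
    masked = ACCOUNT_RE.sub("[ACCOUNT]", text)
    # Longest names first so "Jane Doe-Smith" is not partially masked as "Jane Doe".
    for name in sorted(employee_names, key=len, reverse=True):
        masked = re.sub(re.escape(name), "[EMPLOYEE]", masked, flags=re.IGNORECASE)
    return masked


# Hypothetical input; short codes such as a 4-digit GL code are left alone.
raw = "Refund to 1234567890 approved by Jane Doe, booked to GL 4010."
print(mask(raw, ["Jane Doe"]))
```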

For Customer Success:

- Custom Copilot with product documentation, troubleshooting guides, approved response templates

- No access to customer database (policy enforcement by design)

- Integration with ticketing system for context

For Sales:

- Custom Copilot with product catalog, competitive intelligence, pricing guidelines, case studies

- No access to closed-deal pricing or customer contracts

- Integration with CRM for deal context

The Principle: Move from “AI that knows everything” to “AI that knows OUR business within policy bounds.”

Phase 5: Institutionalization (Month 6+)

AI adoption becomes “how we work,” not “that AI initiative.”

1. Onboarding Integration

- Every new hire gets AI + Policy training in Week 1

- Department-specific prompt library shared on Day 1

- Manager demonstrates how they personally use AI in daily work

2. Performance Integration

- Managers ask in 1-on-1s: “What tasks are you still doing manually that AI could handle?”

- AI efficiency gains considered in performance reviews (not as a requirement, but as a development opportunity)

- Teams share AI wins in weekly standups

3. Continuous Improvement

- Quarterly policy review (update GREEN/YELLOW/RED based on learnings and new capabilities)

- Monthly prompt library updates (new templates based on team discoveries)

- AI Champion network meets monthly to share cross-department innovations

4. Measurement Discipline

- Track active weekly users by department

- Measure time saved per common task

- Monitor policy confidence scores

- Calculate ongoing ROI (hours saved × average hourly cost)

The Metrics That Matter

Stop tracking vanity metrics like “Number of Licenses.” Track the indicators of a healthy system:

Leading Indicators (tell you if adoption will stick):

- Policy Confidence Score: Can your team identify a “Red Zone” task in under 60 seconds? (Target: 8+/10)

- Task Categorization Accuracy: Can 80%+ correctly categorize common tasks as GREEN/YELLOW/RED?

- Time-to-Policy-Answer: How fast does your policy team resolve a “Can I use AI for this?” query? (Target: Under 2 hours)

- Active Champions per 100 employees: Do you have sufficient peer support? (Target: 1-2 per 100)
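Two of the leading indicators above are simple enough to compute from data you probably already have. The sketch below assumes you can export response times from the policy channel as `timedelta` values and that you know your Champion and employee counts; the function names are hypothetical.

```python
from datetime import timedelta

SLA = timedelta(hours=2)  # "under 2 hours" target for policy answers


def sla_hit_rate(response_times):
    """Share of policy questions answered in under the SLA."""
    if not response_times:
        return 0.0
    return sum(1 for t in response_times if t < SLA) / len(response_times)


def champions_per_100(champions, employees):
    """Active Champions per 100 employees (target range: 1-2)."""
    if employees <= 0:
        raise ValueError("employees must be positive")
    return 100 * champions / employees


# Hypothetical sample: four policy questions and a 200-person department.
times = [
    timedelta(minutes=30),
    timedelta(minutes=90),
    timedelta(hours=3),
    timedelta(hours=1),
]
print(f"SLA hit rate: {sla_hit_rate(times):.0%}")
print(f"Champions per 100 employees: {champions_per_100(3, 200)}")
```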

Lagging Indicators (tell you if it’s working):

- Active Weekly Users with Workflow Impact: Are they using it to solve specific, documented workflow pains? (Target: 60-80%)

- Hours Saved per Employee per Week: Varies by role, but should be measurable and documented

- Policy Compliance Rate: Near-zero violations indicate both adoption AND safety (Target: <0.1% incident rate)

- Employee Satisfaction with AI Tools: Survey-based measurement (Target: 8+/10)

Business Impact Indicators:

- Process Time Reduction: Month-end close, proposal generation, ticket resolution times

- Cost Savings: Overtime reduction, headcount avoidance, efficiency gains

- Quality Improvements: CRM data completeness, customer satisfaction scores, error rates

- Revenue Impact: More selling time, faster deal cycles, improved win rates

Summary: The 30-60-90 Day Action Plan

Days 1-30:

- Weeks 1-2: Build GREEN/YELLOW/RED policy framework with examples

- Week 3: Select pilot department, identify top pain points

- Week 4: Create role-specific prompt templates with policy guardrails

Days 31-60:

- Weeks 5-6: Run pilot with volunteers, refine templates based on real usage

- Week 7: Identify AI Champions from pilot group based on enthusiasm and judgment

- Week 8: Begin department-wide rollout with Champion support

Days 61-90:

- Weeks 9-10: Complete first department rollout, measure and document results

- Weeks 11-12: Begin rollout to 2nd and 3rd departments using proven framework

- Week 12: Present comprehensive results to leadership, plan continued scaling

Month 4+:

- Deploy custom solutions for high-adoption teams

- Integrate AI into onboarding and performance management

- Establish continuous improvement rhythm with quarterly reviews

The Leadership Commitment Required

This playbook only works if leadership commits to:

1. Time Allocation

- Teams need 2-3 hours/week in Month 1 to learn without it being “extra work”

- Champions need 2 hours/week ongoing to support peers

- This isn’t “in addition to” their job; it IS part of their job

2. Visible Sponsorship

- Executives demonstrate AI usage in their own meetings

- Managers share their personal workflows with teams

- Leadership celebrates wins publicly and regularly

3. Policy Support

- 2-hour response SLA for policy questions (non-negotiable)

- Quarterly policy reviews to adapt to new capabilities

- Zero tolerance for policy violations, but also zero punishment for good-faith questions

4. Measurement Discipline

- Track the metrics that matter, not vanity metrics

- Review adoption progress monthly with leadership

- Adjust strategy based on data, not assumptions

5. Failure Tolerance

- Not every AI experiment will work; that’s expected and acceptable

- “We tried AI for this task and it didn’t help” is a valid, valuable outcome

- Focus on learning and iteration, not perfection

The Bottom Line

The gap between 20% and 80% adoption isn’t about technology; it’s about System Design.

The enterprises that win in 2026 won’t be the ones with the best tools. They’ll be the ones with the best adoption architectures:

✅ Clear policy that creates confidence

✅ Practical training that solves real problems

✅ Real support when employees have questions

✅ Systematic rollout that builds on proven wins

✅ Continuous improvement that adapts as teams learn

Your AI investment is already made. The tools are already deployed.

Now the question is: “Do we have the discipline to make our existing investment work?”

90 days from now, you can be showing your board a 60-80% adoption rate with measurable ROI. Or you can still be wondering why your multimillion-dollar investment is sitting idle.

What’s your biggest challenge in rolling out AI adoption at your organization?

This is Part 3 of a 3-part series on Enterprise AI Adoption.

Missed earlier parts?

- [Part 1: The AI Adoption Paradox – Why Everyone Has a License but Nobody Has a Clue]

- [Part 2: From “Clueless” to Confident – The GREEN/YELLOW/RED AI Policy]

Subscribe to The Abhay Perspective here & on LinkedIn for weekly frameworks on building AI-enabled operations.
